When we work on a project, we like working with short-lived feature branches in the form of small pull requests. PRs are great, but sometimes it can be hard for other people to see the changes you're about to merge. To make testing more manageable, we've been using Docker containers as preview containers.

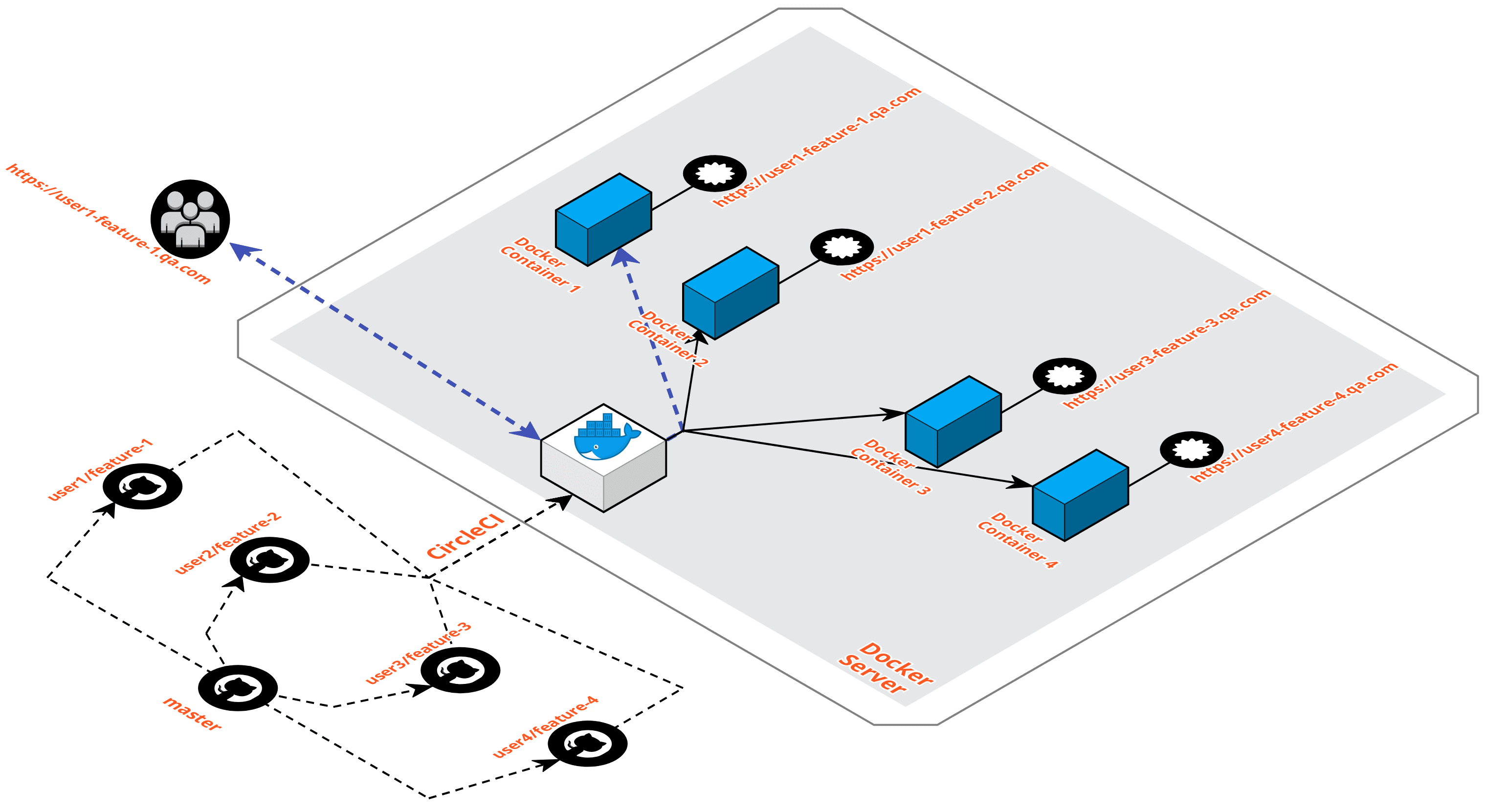

Architecture

This architecture is made of:

- CircleCI to build Docker images for the previews;

- a server to run the containers;

- some DNS configuration to map a subdomain to a particular container;

- GitHub PRs to get deployment notifications with URLs for each preview.

We settled on this architecture after trying a couple of different approaches. We tried Dokku and Docker Compose, and both failed us: they were too complex for what we need, which is just disposable app containers that are easy to create and destroy.

We ended up running all the services the app needs inside a single container. This is actually a bad practice in Docker terms, but it's rock solid compared to running multiple containers for each open PR.

Containerization

To run everything in a single container, we've created the nebulab/baywatch repository where we store every combination of tools and services we need.

If you take a look at the baywatch source, you'll see we have a ton of different combinations of tools and services. It's pretty basic: the Dockerfile installs all the requirements and the entrypoint.sh is used to run multiple services with a single command.
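To make the idea concrete, here is a minimal sketch of what an entrypoint of this kind could look like. It is not the actual baywatch script: the service names (`postgresql`, `redis-server`) and the `SERVICES` and `SERVICE_CMD` variables are assumptions made up for illustration.

```shell
#!/bin/bash
# Hypothetical sketch of a multi-service entrypoint; not the real baywatch script.
# SERVICES and SERVICE_CMD are illustrative names, not part of baywatch.

# Space-separated list of init services to start (assumed names).
SERVICES="${SERVICES:-postgresql redis-server}"

# Command used to control services; override it (e.g. with "echo") for a dry run.
SERVICE_CMD="${SERVICE_CMD:-service}"

start_services() {
  local name
  for name in $SERVICES; do
    # Start each service in turn and stop at the first failure.
    "$SERVICE_CMD" "$name" start || return 1
  done
}

# Dry run: print what would be started instead of starting anything.
SERVICE_CMD=echo start_services
```

In a real image the last line would simply be `start_services`, so that a single command brings up everything the application depends on.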

We use these images as the base image for the Dockerfile that runs the application. An example could be:

```dockerfile
FROM nebulab/baywatch:ruby-2-4-redis-postgres

ENV APP_HOME=/preview \
    RAILS_SERVE_STATIC_FILES=true

RUN mkdir $APP_HOME
WORKDIR $APP_HOME

ADD . $APP_HOME
COPY dump.sql /dump.sql

RUN gem install bundler && bundle check --path vendor/bundle || bundle install --path vendor/bundle

RUN /entrypoint.sh && \
    su --command 'createuser --superuser root' postgres && \
    bundle exec rake db:create && \
    bundle exec rails dbconsole < /dump.sql && \
    bundle exec rails db:migrate && \
    bundle exec rake assets:precompile && \
    service postgresql stop

RUN echo "#!/bin/bash" >> /rails-entrypoint.sh
RUN echo "/entrypoint.sh" >> /rails-entrypoint.sh
RUN echo "bundle exec sidekiq -c 1 -d -L log/sidekiq.log" >> /rails-entrypoint.sh
RUN echo "bundle exec rails server --port 3000 --binding 0.0.0.0" >> /rails-entrypoint.sh
RUN chmod +x /rails-entrypoint.sh

EXPOSE 3000

ENTRYPOINT ["/rails-entrypoint.sh"]
```

The above example is something we would use for a Ruby on Rails application that uses Ruby 2.4, PostgreSQL, Redis and Sidekiq.

Three things are worth noticing:

- RAILS_SERVE_STATIC_FILES=true: preview containers do not receive a lot of traffic, so we can serve assets directly with Ruby on Rails;

- COPY dump.sql /dump.sql: we load data for the preview app from an external database dump;

- ENTRYPOINT ["/rails-entrypoint.sh"]: we use an entrypoint that runs both services and application-specific commands.

Docker Server

We run all these containerized apps on a bare metal server (it's cheaper and more powerful than a cloud server). How big the server needs to be depends directly on the number of Docker containers we want to run simultaneously.

The server is configured with:

- nginx-proxy: this routes a particular subdomain to a particular container;

- docker-letsencrypt-nginx-proxy-companion: this will generate valid certificates for every running container.

Install instructions for both are available in each project's README. The only extra configuration we added is --restart always so that the containers will be restarted in the unlikely event they go down.
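As a rough sketch of that setup, the two proxy containers could be started as shown below. The flags follow the two projects' READMEs as we understand them, and the image names (`jwilder/nginx-proxy`, `jrcs/letsencrypt-nginx-proxy-companion`), the container names and the `run` dry-run helper are assumptions, so check the READMEs before relying on any of it.

```shell
#!/bin/bash
# Sketch only: image names, container names and the dry-run helper are
# assumptions; verify the flags against each project's README.

# Print commands instead of executing them unless DRY_RUN=0 is set.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

start_proxy() {
  # nginx-proxy watches the Docker socket and routes each VIRTUAL_HOST
  # to the matching container.
  run docker run -d --restart always --name nginx-proxy \
    -p 80:80 -p 443:443 \
    -v /etc/nginx/certs -v /etc/nginx/vhost.d -v /usr/share/nginx/html \
    -v /var/run/docker.sock:/tmp/docker.sock:ro \
    jwilder/nginx-proxy

  # The companion reuses nginx-proxy's volumes to install certificates.
  run docker run -d --restart always --name letsencrypt-companion \
    --volumes-from nginx-proxy \
    -v /var/run/docker.sock:/var/run/docker.sock:ro \
    jrcs/letsencrypt-nginx-proxy-companion
}

start_proxy
```

By default the script only prints the two `docker run` commands; setting `DRY_RUN=0` would execute them for real.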

The other server-related configuration is DNS: a *.qa.com CNAME needs to point at the qa.com server so that subdomain requests land on the server where the containers are running (nginx-proxy handles the rest).

It's not hard to configure a server like this but, to make it even easier, everything can be set up with docker-preview-server, a Chef cookbook that configures the server automatically.

CircleCI

We then use a CI server (we like CircleCI, but any CI should work) to build the Docker containers and tell the preview server to run them.

Here is the CircleCI job configuration we use (this only runs for branches with a PR open):

```yaml
jobs:
  preview:
    docker:
      - image: circleci/ruby
    steps:
      - checkout
      - run:
          name: Set Feature Branch Name
          command: |
            echo "export SUBDOMAIN=`echo $CIRCLE_BRANCH | sed \"s/[^[:alnum:]]/-/g\"`" > $CIRCLE_BUILD_NUM
            echo "export CONTAINER=$CIRCLE_PROJECT_REPONAME-\$SUBDOMAIN" >> $CIRCLE_BUILD_NUM
            echo "export DIRECTORY=$CIRCLE_PROJECT_REPONAME/\$SUBDOMAIN" >> $CIRCLE_BUILD_NUM
      - run:
          name: Notify Preview Deploy Started
          command: |
            source $CIRCLE_BUILD_NUM
            curl -X POST -H "Content-Type: application/json" -H "Accept: application/vnd.github.ant-man-preview+json" \
              "https://api.github.com/repos/nebulab/awesomeprj/deployments?access_token=<token>" \
              -d '{ "ref": "'$CIRCLE_SHA1'", "auto_merge": false, "required_contexts": [], "environment": "preview", "transient_environment": true }' | \
              grep '"id"' | head -1 | awk '{gsub(/,/,""); print $2}' > github_deploy_id
      - run:
          name: Upload Application To Docker Server
          command: |
            source $CIRCLE_BUILD_NUM
            ssh [email protected] "rm -fr $DIRECTORY && mkdir -p $DIRECTORY"
            tar c . | ssh [email protected] "tar xC $DIRECTORY"
      - run:
          name: Run Docker Container On Docker Server
          command: |
            source $CIRCLE_BUILD_NUM
            ssh [email protected] "\
              docker rm -f $CONTAINER; \
              docker run -d --restart always --name $CONTAINER \
                -e VIRTUAL_HOST=$SUBDOMAIN.qa.com \
                -e APP_HOSTNAME=$SUBDOMAIN.qa.com \
                $CONTAINER"
      - run:
          name: Notify Preview Deploy Complete
          command: |
            source $CIRCLE_BUILD_NUM
            curl -X POST -H "Content-Type: application/json" -H "Accept: application/vnd.github.ant-man-preview+json" \
              "https://api.github.com/repos/nebulab/awesomeprj/deployments/`cat github_deploy_id`/statuses?access_token=<token>" \
              -d '{ "state": "success", "environment_url": "http://'$SUBDOMAIN'.qa.com/", "environment": "preview" }'
```
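Two small text-processing pipelines in this job do most of the quiet work: one turns the branch name into a subdomain, the other extracts the deployment id from GitHub's response. The sketch below isolates them so they can be tried locally. The function names and the sample payload are made up for illustration; only the `sed` and `grep`/`head`/`awk` expressions come from the job itself.

```shell
#!/bin/bash
# Turn a branch name into a subdomain-safe string: every character that is
# not a letter or a digit becomes a dash (same sed expression as in the job).
branch_to_subdomain() {
  printf '%s\n' "$1" | sed 's/[^[:alnum:]]/-/g'
}

# Read pretty-printed JSON on stdin and print the value of the first "id"
# field, mirroring the grep/head/awk pipeline used in the job.
first_json_id() {
  grep '"id"' | head -1 | awk '{gsub(/,/,""); print $2}'
}

branch_to_subdomain "feature/add-login_page"   # prints feature-add-login-page

# Made-up sample payload, shaped like a pretty-printed API response.
printf '{\n  "url": "https://example.invalid/deployments/42",\n  "id": 42,\n  "creator": {\n    "id": 7\n  }\n}\n' | first_json_id   # prints 42
```

The id extraction relies on the response being pretty-printed with the deployment's own `"id"` appearing first; if `jq` happens to be available on the CI image, `jq -r '.id'` would likely be a sturdier choice.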

Some parts of the configuration were removed for simplicity. Here is what is happening:

- CircleCI has the application code;

- GitHub is notified that a deploy has started for that particular branch;

- CircleCI has ssh access to the preview server;

- application code is uploaded to the preview server;

- Docker image is built;

- Docker container is run;

- GitHub is notified of the deploy URL once the container is ready.
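The image build is one of the parts left out of the job above. A hypothetical version of that step, run on the preview server before the container is started, might look like the sketch below. It assumes that the uploaded directory contains the Dockerfile and that the image is tagged with the same name later passed to `docker run`; the function name, the `DOCKER` override and the sample values are illustrative only.

```shell
#!/bin/bash
# Hypothetical build step; not taken from the original job configuration.
# Assumes the uploaded directory holds the Dockerfile and that the image tag
# matches the name later used for the container.
build_preview_image() {
  local container="$1" directory="$2"
  # DOCKER can be overridden (e.g. DOCKER=echo) to preview the command.
  "${DOCKER:-docker}" build --tag "$container" "$directory"
}

# Dry run with made-up values: prints the docker arguments instead of building.
DOCKER=echo build_preview_image "awesomeprj-my-branch" "awesomeprj/my-branch"
```

One detail to keep in mind with a step like this: Docker requires image repository names to be lowercase, so a repository or branch name containing capital letters would need lowercasing before being used as a tag.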

This configuration can be completely streamlined by using the feature-branch-preview Orb we released to make the configuration more manageable. The Orb also generates Let's Encrypt certificates automatically so that your preview containers will have a valid SSL certificate.

Your CircleCI configuration when using the Orb will be something like this:

```yaml
jobs:
  - feature-branch/preview:
      domain: qa.com
      github_repo: nebulab/awesomeprj
      github_token: <token>
      letsencrypt_email: <email-for-ssl-cert>
      server: qa.com
      user: ubuntu
```

Results

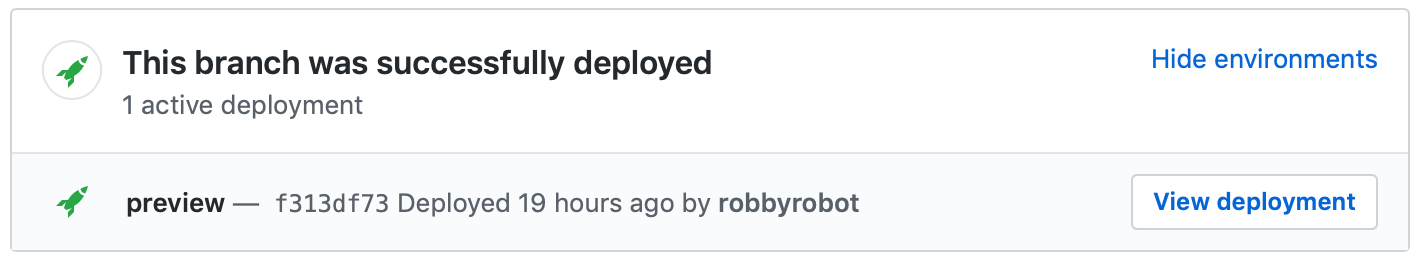

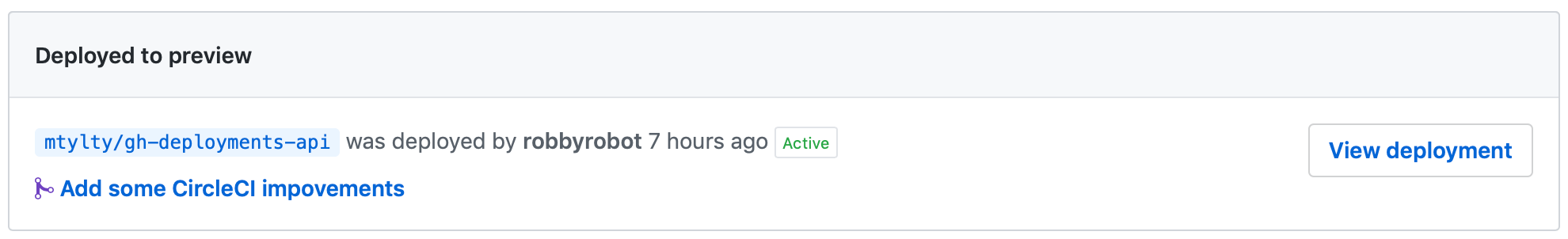

Once everything is configured this way pull requests will start showing deploys links to subdomains that can be easily visited by both clients and developers to check the status of a particular PR:

You can also visit the environments section of your repository to get the latest deployed branch:

Conclusions

This way of using Docker completely changed the way we work: easy and fast QA can happen as soon as a PR is opened, which translates into faster and safer PR merges.

One downside? It's complex and has many moving parts but, once it's ready, it works flawlessly and only breaks if the preview server runs out of resources.

Got questions or suggestions? Leave a comment!